- Hands-On Deep Learning for Games

- Micheal Lanham

- 232 words

- 2021-06-24 15:47:58

The need for Dropout

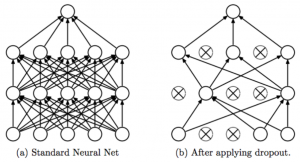

Now, let's go back to our much-needed discussion about Dropout. We use dropout in deep learning as a way of randomly cutting network connections between layers during each iteration. An example showing an iteration of dropout being applied to three network layers is shown in the following diagram:

Before and after dropout

The important thing to understand is that the same connections are not cut every time; a new random subset is chosen on each iteration. This keeps the network from relying too heavily on any single path, so it becomes less specialized and more generalized. Generalizing a model is a common theme in deep learning, and we often do this so our models can handle a broader set of problems, including data they did not see during training. Of course, there may be times when generalizing a network limits its ability to learn.
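To make the "not always the same connections" point concrete, here is a minimal sketch in plain Python. The helper `dropout_mask` and the layer size of 8 are hypothetical names and values chosen for illustration; they do not come from the book's sample code.

```python
import random

def dropout_mask(n_units, rate, rng):
    """Return a list of 0/1 flags; 0 marks a unit dropped for this iteration.

    Hypothetical helper for illustration only.
    """
    return [0 if rng.random() < rate else 1 for _ in range(n_units)]

rng = random.Random(0)  # fixed seed so the run is repeatable

# Draw a fresh mask for each of five training iterations.
masks = [dropout_mask(8, 0.5, rng) for _ in range(5)]
for step, mask in enumerate(masks):
    print(f"iteration {step}: {mask}")
```

Each printed row is a different pattern of kept and dropped units, which is why no single unit can be relied on throughout training.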

If we go back to the previous sample now and look at the code, we see a Dropout layer being used like so:

x = Dropout(.5)(x)

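That simple line of code tells the network to randomly drop, or disconnect, 50% of the values flowing into the next layer on each training iteration. Dropout is active only during training; at inference time the layer passes its input through unchanged. It is most often placed between fully connected layers (Input -> Dense -> Dense), although it can be applied to other layer types as well, and it is a very useful way of reducing overfitting and improving accuracy on unseen data. This may or may not account for some of the improved performance in the previous example.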
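As a rough illustration of what a rate of 0.5 means, the sketch below mimics the behavior in plain Python. It assumes the "inverted dropout" scheme, in which surviving values are scaled by 1 / (1 - rate) during training, as the Keras documentation describes for its `Dropout` layer. The function `apply_dropout` is a hypothetical stand-in, not the Keras implementation, and it assumes `rate` is less than 1.

```python
import random

def apply_dropout(activations, rate, training, rng):
    """Inverted dropout on a list of floats (illustrative, not the Keras source)."""
    if not training or rate == 0.0:
        # Inference: values pass through unchanged.
        return list(activations)
    # Scale the survivors so the expected sum matches the original (assumes rate < 1).
    keep_scale = 1.0 / (1.0 - rate)
    return [0.0 if rng.random() < rate else a * keep_scale for a in activations]

rng = random.Random(42)  # fixed seed so the run is repeatable
layer_output = [1.0, 2.0, 3.0, 4.0]

train_out = apply_dropout(layer_output, 0.5, training=True, rng=rng)
infer_out = apply_dropout(layer_output, 0.5, training=False, rng=rng)

print("training: ", train_out)
print("inference:", infer_out)
```

During training, each value is either zeroed or doubled; at inference, the list comes back untouched. The scaling is a design choice that keeps the average signal level the same in both modes, so no adjustment is needed when the model is deployed.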

In the next section, we will look at how deep learning mimics the memory sub-process or temporal scent.