- Hands-On Deep Learning for Games

- Micheal Lanham

- 268 words

- 2021-06-24 15:47:58

Memory and recurrent networks

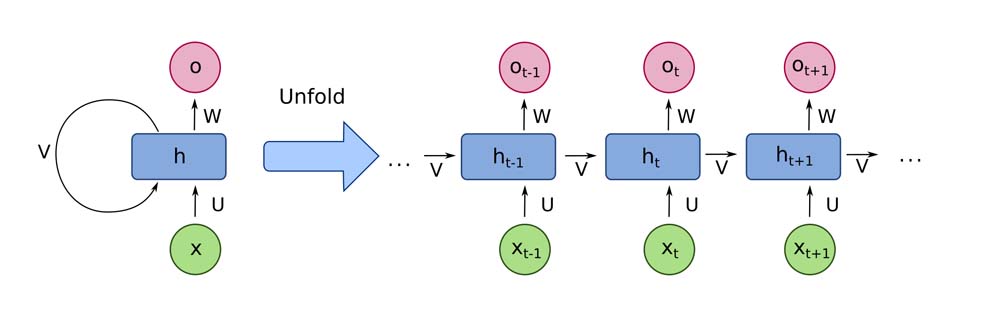

Memory is often associated with Recurrent Neural Network (RNN), but that is not entirely an accurate association. An RNN is really only useful for storing a sequence of events or what you may refer to as a temporal sense, a sense of time if you will. RNNs do this by persisting state back onto itself in a recursive or recurrent loop. An example of how this looks is shown here:

The diagram shows the internal representation of a recurrent neuron that is set to track a number of time steps, or iterations, where x represents the input at a time step and h denotes the state. The network weights W, U, and V are shared across all time steps and are trained using a technique called Backpropagation Through Time (BPTT). We won't go into the math of BPTT and will leave that for the reader to explore, but just realize that the weights in a recurrent network are optimized with a gradient-based method that minimizes a cost function.
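To make the shared-weight idea concrete, here is a minimal NumPy sketch of a single-layer recurrent network and a hand-written BPTT pass. It assumes a common convention that the text does not spell out: U maps the input x to the state, W maps the previous state h to the new state, V maps the state to an output, the activation is tanh, and the cost is a squared error summed over all time steps. The function names `rnn_forward` and `bptt` are illustrative, not taken from the book.

```python
import numpy as np

def rnn_forward(xs, h0, U, W, V):
    """Run a vanilla RNN over a sequence of input vectors.

    Assumed convention: h_t = tanh(U x_t + W h_{t-1}), y_t = V h_t.
    Returns all states (including h0) and all outputs.
    """
    hs = [h0]
    ys = []
    for x in xs:
        h = np.tanh(U @ x + W @ hs[-1])  # the same U and W at every step
        hs.append(h)
        ys.append(V @ h)                 # the same V at every step
    return hs, ys

def bptt(xs, targets, h0, U, W, V):
    """Squared-error loss and its gradients via backpropagation through time."""
    hs, ys = rnn_forward(xs, h0, U, W, V)
    loss = 0.0
    dU, dW, dV = np.zeros_like(U), np.zeros_like(W), np.zeros_like(V)
    dh_next = np.zeros_like(h0)  # gradient arriving from step t + 1
    for t in reversed(range(len(xs))):
        err = ys[t] - targets[t]          # dLoss/dy_t
        loss += 0.5 * float(err @ err)
        dV += np.outer(err, hs[t + 1])    # hs[t + 1] is the state after step t
        dh = V.T @ err + dh_next          # output path + recurrent path
        draw = (1.0 - hs[t + 1] ** 2) * dh  # back through tanh
        dU += np.outer(draw, xs[t])
        dW += np.outer(draw, hs[t])       # hs[t] is the previous state
        dh_next = W.T @ draw              # pass the gradient to step t - 1
    return loss, dU, dW, dV

# Usage: random weights and a short random sequence.
rng = np.random.default_rng(0)
U = rng.normal(scale=0.5, size=(4, 3))
W = rng.normal(scale=0.5, size=(4, 4))
V = rng.normal(scale=0.5, size=(2, 4))
xs = [rng.normal(size=3) for _ in range(5)]
targets = [rng.normal(size=2) for _ in range(5)]
loss, dU, dW, dV = bptt(xs, targets, np.zeros(4), U, W, V)
```

The backward loop walks the time steps in reverse and adds every step's contribution into the same `dU`, `dW`, and `dV`; that accumulation is what it means for the weights to be shared. A plain gradient-descent update would then subtract a small multiple of each gradient from its weight matrix.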

A recurrent network allows a neural network to identify sequences of elements and predict which elements typically come next. This has huge applications in predicting text, stocks, and, of course, games. Pretty much any activity that benefits from some grasp of time or of a sequence of events can benefit from an RNN. The exception is the standard RNN, the type shown previously, which fails to predict longer sequences because its gradients vanish as they are propagated back through many time steps. We will get further into this problem and its solution in the next section.
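The gradient problem with standard RNNs can be observed numerically. The sketch below assumes a tanh recurrent cell, h_t = tanh(U x_t + W h_{t-1}), and uses a hypothetical helper, `state_jacobian_norms`, to track how strongly the state at step t still depends on the initial state. Each step multiplies the Jacobian by diag(1 - h_t^2) W, so when that factor has a norm below 1, the gradient signal reaching early time steps shrinks geometrically. This is an illustration of vanishing gradients, not the book's code.

```python
import numpy as np

def state_jacobian_norms(W, U, xs, h0):
    """Return the spectral norm of d h_t / d h_0 for t = 1..len(xs).

    Assumes the cell h_t = tanh(U x_t + W h_{t-1}).
    """
    h = h0
    J = np.eye(len(h0))  # d h_0 / d h_0
    norms = []
    for x in xs:
        h = np.tanh(U @ x + W @ h)
        # Chain rule: d h_t / d h_0 = diag(1 - h_t^2) W (d h_{t-1} / d h_0)
        J = ((1.0 - h ** 2)[:, None] * W) @ J
        norms.append(np.linalg.norm(J, 2))
    return norms

rng = np.random.default_rng(1)
n = 8
Q, _ = np.linalg.qr(rng.normal(size=(n, n)))  # random orthogonal matrix
W = 0.5 * Q                                   # spectral norm exactly 0.5
U = rng.normal(size=(n, 3))
xs = [rng.normal(size=3) for _ in range(50)]
norms = state_jacobian_norms(W, U, xs, np.zeros(n))
for t in (1, 10, 50):
    print(f"step {t:2d}: ||dh_t/dh_0|| = {norms[t - 1]:.3e}")
```

With this choice of W, every step scales the norm by at most 0.5, so by step 50 it is at most 0.5**50 (roughly 1e-15): an error at step 50 has essentially no influence on how the network adjusts its response to step 0. Scaling W up so the per-step factors exceed 1 can instead make the norms grow, which is the related exploding-gradient behavior.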
